This week in San Jose, California, NVIDIA kicked off its GPU Technology Conference with a slew of announcements around its new virtualized GPU portfolio. As part of this media push, the company launched its NVIDIA GeForce GRID cloud gaming platform, “which allows gaming-as-a-service providers to stream next-generation games to virtually any device, without the lag that hampers current offerings.”

Looking to move market share away from the console gaming industry as well as bring new users into the fold, the company has already partnered up with a few cloud gaming providers.

Today, most game developers rely on users to purchase a disc-based console or a suitably capable PC to supply processing power for their software. The landscape is changing, though, as companies like OnLive, Otoy and Gaikai have started offering cloud-based gaming services. The model is akin to streaming content through Netflix, and users can access the service through their existing computer, TV, tablet or smartphone.

Moving the heavy lifting to a datacenter adds device flexibility for end users, but it also introduces a major challenge for network-dependent gamers. Latency, or “lag” in gamerspeak, can ruin the multiplayer experience for online players.

The subject has drawn the ire of many gamers, especially those who play the very popular first-person shooter games. Say one player fires an automatic weapon at an online opponent and half the bullet spray appears to make contact. Because of lag, the server may register only one or two hits.

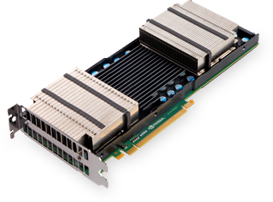

NVIDIA aims to allay these concerns by increasing the processing speed and capacity available to cloud gaming providers. Each GeForce GRID processor consists of two Kepler GPUs, each with its own encoder, and is outfitted with 8GB of VRAM and 320GB/s of memory throughput. Each processor comes equipped with 3,072 CUDA cores capable of 4.7 teraflops of shader performance within a 250W TDP.

While first-generation cloud gaming servers relied on a single GPU per server, GeForce GRID servers enable up to four Kepler GPUs to be connected to each server.

The new processors employ advanced power management techniques to optimize performance per watt, an important metric for cloud-based providers. According to the official release, the GPUs minimize power consumption by simultaneously encoding up to eight game streams, allowing providers to support millions of concurrent gamers. On the server side, per-game energy requirements are cut in half.
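For a rough sense of what performance per watt means here, the quoted figures for one GeForce GRID processor (4.7 teraflops, 250W TDP, 3,072 CUDA cores) can be divided out. The short Python sketch below is illustrative only: the function names are hypothetical, and it assumes the processor hits its peak shader rate while drawing exactly its TDP, which real workloads will not match.

```python
# Figures quoted in the article for one GeForce GRID processor.
SHADER_TFLOPS = 4.7   # peak shader performance, in teraflops
TDP_WATTS = 250       # thermal design power, in watts
CUDA_CORES = 3072     # CUDA cores per processor


def gflops_per_watt(tflops: float, watts: float) -> float:
    """Peak gigaflops per watt, assuming peak throughput at full TDP."""
    return tflops * 1000.0 / watts


def gflops_per_core(tflops: float, cores: int) -> float:
    """Peak gigaflops attributable to each CUDA core."""
    return tflops * 1000.0 / cores


if __name__ == "__main__":
    print(f"{gflops_per_watt(SHADER_TFLOPS, TDP_WATTS):.1f} GFLOPS per watt")
    print(f"{gflops_per_core(SHADER_TFLOPS, CUDA_CORES):.2f} GFLOPS per core")
```

On those assumptions, the quoted numbers work out to about 18.8 gigaflops per watt at peak.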

NVIDIA claims the GeForce GRID platform can reduce game server latency to as little as 10 ms – the same delay as a local console – by capturing and encoding game frames in a single pass. During GTC, the technology was successfully demoed using only a TV, game controller and a network connection to Gaikai’s service.

The gaming industry has seen a recent drop in revenue, but still pulled in $17 billion in retail sales during 2011. That figure does not include the additional $7.24 billion gained from rentals, subscriptions, mobile and social game purchases. If NVIDIA can deliver on its latency claims, that second number could increase substantially. Taking direct aim at Sony, Microsoft and Nintendo, cloud gaming providers have made their platforms available on smart TV platforms, hoping to attract curious or casual gamers unwilling to invest in consoles.

Although GeForce GRID may not signal the end of console gaming, it certainly threatens to steal some of its thunder and possibly attract a new customer base for the gaming industry.