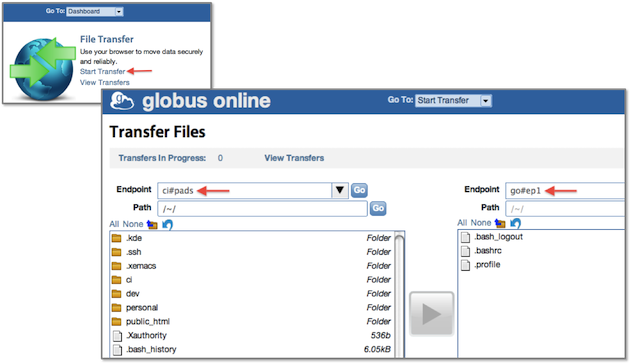

When Blue Waters comes online this year, researchers will be able to spend more of their valuable time on actual research, thanks in part to Globus Online, a cloud-based managed file transfer service. The National Center for Supercomputing Applications (NCSA) has selected Globus Online as the data movement solution for the Blue Waters supercomputer. Globus Online allows end users to move and synchronize files reliably between multiple locations without having to install and manage complex software.

Blue Waters is on track to become one of the world’s premier supercomputers, with an expected peak performance of more than 11.5 petaflops. The powerful system will enable cutting-edge scientific breakthroughs in a broad range of disciplines, but first researchers need a reliable, secure, and preferably easy way to move their data. That’s what Globus Online was made for, explains Steve Tuecke, co-PI for the Globus project at the University of Chicago and Argonne National Laboratory’s Computation Institute.

While there are other file transfer tools out there, they are more targeted to the consumer space or commercial market, says Tuecke. What sets Globus Online apart is that it’s geared toward the research world, where high-speed networks go hand-in-hand with complex security requirements. Globus Online caters specifically to the research community, and has targeted that audience every step of the way.

In light of this, it’s perhaps not surprising that when NCSA needed a data transfer solution for Blue Waters, Globus Online was their first choice. Traditionally, Globus would make the first move with prospective customers, but Tuecke said that in this instance, it was the other way around: NCSA reached out to them. Blue Waters, unbeknownst to Globus, had performed their own trial runs with the solution, liked what it offered, and went to Globus to work out a partnership.

Michelle Butler, NCSA technical program manager, shares a similar story. She notes that there are other solutions out there that can either transfer data or manage data, but not with the ease of use, reliability, and security that Globus offers. According to Butler, when it comes to “a push-button, easy data transfer mechanism that uses the tools [they’ve] been using for years (Globus tools like GridFTP), nothing else compares.”

Butler explains that Globus Online will be used for transferring data into and out of the Blue Waters machine. The SaaS-based tool will also be tasked with moving data within NCSA, and into both the Blue Waters and NCSA archives. “Anywhere data needs to be transferred within NCSA or Blue Waters, it will be transferred across the Globus Online mechanism,” says Butler.

When asked about the unique requirements of Blue Waters, Butler cited the use of one-time passwords, an authentication method that relies on key fobs. The file transfer service must also keep pace with Blue Waters’ powerful servers and interconnects, including forty 10 Gigabit Ethernet links, as well as an integrated file system capable of providing more than a terabyte per second of aggregate storage bandwidth. Globus Online is also supplying Blue Waters with a custom interface for a more seamless user experience.
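To put those link figures in perspective, here is a back-of-the-envelope sketch in Python. It assumes the forty 10 Gigabit Ethernet links could all be driven at line rate at once, uses decimal units, and ignores protocol overhead, so the result is a theoretical ceiling and not a measured Blue Waters number. The function names are illustrative only.

```python
def aggregate_gbps(links: int, gbps_per_link: float) -> float:
    """Combined line rate, in gigabits per second, of identical links."""
    return links * gbps_per_link


def transfer_seconds(size_bytes: float, rate_gbps: float) -> float:
    """Ideal time to move size_bytes at rate_gbps, with zero overhead."""
    size_bits = size_bytes * 8
    return size_bits / (rate_gbps * 1e9)


# Figures quoted in the article: forty links at 10 Gb/s each.
total_gbps = aggregate_gbps(40, 10.0)          # 400 Gb/s, i.e. 50 GB/s
one_terabyte = 1e12                            # decimal terabyte, in bytes
ideal_time = transfer_seconds(one_terabyte, total_gbps)

print(f"Aggregate external rate: {total_gbps:.0f} Gb/s")
print(f"Ideal time to move 1 TB: {ideal_time:.0f} s")
```

Under these assumptions, the external links top out at about 50 gigabytes per second, well below the quoted file system bandwidth of more than a terabyte per second, which suggests the network links rather than storage would be the limiting factor for transfers in and out of the machine.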

Tuecke notes that satisfying the requirements of the Blue Waters project has contributed to improvements in the company’s entire software ecosystem, including its original offering, Globus Toolkit. The latest release, Globus Toolkit 5.2, now comes with native packaging to support the various flavors of Linux.

The Globus Online service is based on the open-source Globus Toolkit, a fundamental enabling technology for grid computing. Developed and provided by the Globus Alliance, the popular middleware toolkit is used in HPC production grids worldwide. At the heart of Globus Online is GridFTP, a data transfer protocol optimized for high-speed wide-area networks. Although GridFTP is a highly effective data transfer tool, it can be difficult to implement. Enter Globus Online, which delivers the same functionality in an easy-to-use format. It works across and between any servers that have the GridFTP software installed, but the underlying complexity is hidden from the user. “Under the covers,” says Tuecke, “Globus Online is really just a fancy client to our traditional Globus toolkit to FTP, but we’ve made it adoptable by any researcher out there.”

Security is another major feature of Globus Online. In a typical research environment, data needs to be able to cleanly traverse multiple security domains, so Globus created a technique for managing cross-organizational, multi-domain security environments. “We were able to do the big data with the cross-organizational security,” says Tuecke.

Globus Online made its debut in November 2010 at the Supercomputing Conference where it was also announced that NERSC (the National Energy Research Scientific Computing Center) had selected the file transfer tool. Since then, adoption has increased steadily. Globus Online is the data transfer tool of choice at a number of universities and research centers, including the UK National Grid Service.

Tuecke sees big opportunities for Globus Online going forward, perhaps even going beyond simple transfer to a more intensive management role. He believes the market for cloud services will continue to expand as the technology matures. And as cloud-based systems evolve to support a broader range of workloads, Globus’ mission is to make the process as easy as possible on the data side, says Tuecke.

As a final note, Tuecke says the software-as-a-service path is all about delivering needed capability in a fashion that is easily adoptable and employable by end users without having to install software and manage updates and so on. “Users just want to point their browser at a site and use the capability,” he says.